ZeroID: Open-source identity platform for autonomous AI agents

ZeroID is an open-source identity platform that implements an identity and credentialing layer specifically for autonomous agents and multi-agent systems. The attribution …

Bringing governance and visibility to machine and AI identities

In this Help Net Security interview, Archit Lohokare, CEO of AppViewX, explains how the rise of AI marked a turning point where machine and AI agent identities began …

What vibe hunting gets right about AI threat hunting, and where it breaks down

In this Help Net Security interview, Aqsa Taylor, Chief Security Evangelist, Exaforce, explains vibe hunting, an AI-driven approach to threat detection that inverts …

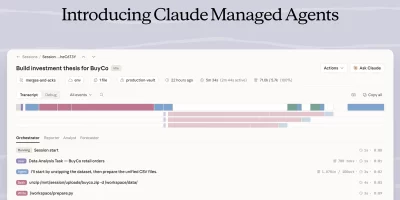

Claude Managed Agents bring execution and control to AI agent workflows

Anthropic’s Claude Managed Agents are a suite of composable APIs for building and deploying cloud-hosted agents at scale, handling sandboxed code execution, …

Meta’s Muse Spark takes AI a step closer to personal superintelligence

Meta Superintelligence Labs has introduced Muse Spark, a natively multimodal reasoning model with support for tool use, visual chain of thought, and multi-agent orchestration. …

AI agent intent is a starting point, not a security strategy

In this Help Net Security interview, Itamar Apelblat, CEO of Token Security, walks through findings from the company’s research, which shows that 65% of agentic chatbots …

Prompt injection tags along as GenAI enters daily government use

Routine use of GenAI has moved into daily operations in state and territorial government environments, placing new security risks within common workflows. A Center for …

What managing partners should ask AI vendors before signing any contract

In this Help Net Security interview, Kumar Ravi, Chief Security & Resilience Officer at TMF Group, argues that over-privileged access and weak workflow controls pose more …

6G network design puts AI at the center of spectrum, routing, and fault management

Wireless network operators are preparing for a generation of infrastructure where AI is built into the architecture from the start. Sixth-generation networks, expected to …

Anthropic’s new AI model finds and exploits zero-days across every major OS and browser

Automated vulnerability discovery tools have existed for decades, and the gap between finding a bug and building a working exploit has always slowed attackers. That gap is now …

Cybercrime losses break the $20 billion mark

Online crime continues to generate rising financial losses, with totals reaching $20.877 billion in 2025. The FBI’s Internet Crime Complaint Center (IC3) report shows a 26% …

GitHub Copilot CLI gets a second-opinion feature built on cross-model review

Coding agents make decisions in sequence: a plan is drafted, implemented, then tested. Any error introduced early compounds as subsequent steps build on the same flawed …

Don't miss

- 88% of self-hosted GitHub servers exposed to RCE, researchers warn (CVE-2026-3854)

- Buggy Vect ransomware is effectively a data wiper, researchers find

- CISA, Microsoft warn of active exploitation of Windows Shell vulnerability (CVE-2026-32202)

- The Exchange Online security controls organizations keep getting wrong

- Identity discovery: The overlooked lever in strategic risk reduction