Google’s TurboQuant cuts AI memory use without losing accuracy

Large language models carry a persistent scaling problem. As context windows grow, the memory required to store key-value (KV) caches expands proportionally, consuming GPU memory and slowing inference. A team at Google Research has developed three compression algorithms: TurboQuant, PolarQuant, and Quantized Johnson-Lindenstrauss (QJL). All three are designed to compress those caches aggressively without degrading model output quality.

The overhead problem in vector quantization

Vector quantization has long been used to compress the high-dimensional numerical representations that AI models process. The technique reduces memory by mapping continuous values to smaller, discrete sets of numbers. The persistent limitation of conventional approaches is that they require storing quantization constants in high precision for every small block of data, adding between one and two extra bits per number. For systems already under memory pressure, that overhead offsets a significant share of the compression gains.
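The "one to two extra bits" figure can be checked with simple arithmetic. The sketch below is illustrative only: the block size of 16 and the 16-bit constants are assumptions chosen to reproduce the range quoted above, and the function names are hypothetical.

```python
def overhead_bits_per_value(block_size: int,
                            constants_per_block: int = 1,
                            constant_bits: int = 16) -> float:
    """Extra bits per stored number spent on per-block quantization constants."""
    return constants_per_block * constant_bits / block_size


def effective_bits_per_value(payload_bits: int,
                             block_size: int,
                             constants_per_block: int = 1,
                             constant_bits: int = 16) -> float:
    """Payload bits plus the amortized cost of the block's constants."""
    return payload_bits + overhead_bits_per_value(
        block_size, constants_per_block, constant_bits
    )


# Assumed setup: blocks of 16 values, constants stored as 16-bit floats.
print(overhead_bits_per_value(16, constants_per_block=1))      # scale only -> 1.0
print(overhead_bits_per_value(16, constants_per_block=2))      # scale + zero point -> 2.0
print(effective_bits_per_value(3, 16, constants_per_block=2))  # nominal 3-bit format -> 5.0
```

Under these assumptions, a nominal 3-bit format that stores a scale and a zero point per block costs 5 bits per value in practice, which is why removing the constants matters at low bit widths.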

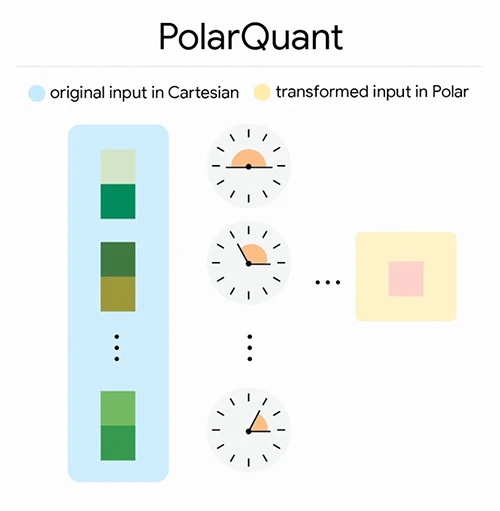

PolarQuant acts as a high-efficiency compression bridge, converting Cartesian inputs into a compact Polar “shorthand” for storage and processing (Source: Google Research)

TurboQuant addresses this by combining two underlying methods. PolarQuant handles the primary compression step by converting standard Cartesian coordinate vectors into polar coordinates. A conventional quantizer records position along each axis independently, requiring normalization steps that vary based on the data. PolarQuant maps pairs of coordinates to a polar system, expressing them as a radius and an angle. Because the angular distribution is predictable and concentrated, the method eliminates the normalization step and the overhead costs it generates.
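The pairing idea can be illustrated with a minimal NumPy sketch. It is not Google's implementation: it covers only the Cartesian-to-polar step described above, uses a uniform angle grid, and leaves radii unquantized. All of those choices, along with the function names, are assumptions made for illustration.

```python
import numpy as np


def to_polar_pairs(x: np.ndarray):
    """Group an even-length vector into (x, y) pairs; return radii and angles."""
    pairs = x.reshape(-1, 2)
    radius = np.hypot(pairs[:, 0], pairs[:, 1])
    angle = np.arctan2(pairs[:, 1], pairs[:, 0])  # values in [-pi, pi]
    return radius, angle


def quantize_angles(angle: np.ndarray, bits: int) -> np.ndarray:
    """Map each angle to one of 2**bits fixed bins (assumed uniform grid)."""
    levels = 2 ** bits
    step = 2 * np.pi / levels
    # The grid is fixed in advance, so no per-block scale or offset is stored.
    return np.floor((angle + np.pi) / step).astype(np.int64) % levels


def dequantize_angles(codes: np.ndarray, bits: int) -> np.ndarray:
    """Return the centre of each angle bin."""
    step = 2 * np.pi / (2 ** bits)
    return -np.pi + (codes + 0.5) * step


def from_polar_pairs(radius: np.ndarray, angle: np.ndarray) -> np.ndarray:
    """Convert (radius, angle) pairs back to a flat Cartesian vector."""
    pairs = np.stack([radius * np.cos(angle), radius * np.sin(angle)], axis=1)
    return pairs.reshape(-1)


rng = np.random.default_rng(0)
vector = rng.standard_normal(128)
radius, angle = to_polar_pairs(vector)
codes = quantize_angles(angle, bits=4)
restored = from_polar_pairs(radius, dequantize_angles(codes, bits=4))
print("relative error:", np.linalg.norm(vector - restored) / np.linalg.norm(vector))
```

Because the angle grid covers a fixed range regardless of the data, the codes need no accompanying normalization constants, which is the property the paragraph above attributes to PolarQuant.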

QJL handles the residual error. Using the Johnson-Lindenstrauss Transform, QJL reduces each remaining vector value to a single sign bit, either positive or negative. This step introduces zero memory overhead. To maintain accuracy despite operating on one-bit representations, QJL uses an estimator that pairs high-precision query vectors with the simplified stored data when computing attention scores.
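A rough sketch of the sign-bit idea follows, assuming a Gaussian random projection and the standard identity that, for a Gaussian vector g, the expected value of sign(g·k)(g·q) equals sqrt(2/π) times the inner product of q and k divided by the norm of k. The function names are hypothetical. The sketch also keeps one norm per stored vector so the estimate is correctly scaled; that choice belongs to this illustration and is not a detail taken from the article.

```python
import numpy as np


def make_projection(dim: int, num_bits: int, seed: int = 0) -> np.ndarray:
    """Random Gaussian matrix shared by all keys and queries (assumed form)."""
    rng = np.random.default_rng(seed)
    return rng.standard_normal((num_bits, dim))


def encode_key(projection: np.ndarray, key: np.ndarray):
    """Keep one sign per projected coordinate, plus the key's norm."""
    signs = np.where(projection @ key >= 0, 1, -1).astype(np.int8)
    return signs, float(np.linalg.norm(key))


def estimate_inner_product(projection: np.ndarray, query: np.ndarray,
                           signs: np.ndarray, key_norm: float) -> float:
    """Pair the full-precision projected query with the one-bit key code."""
    num_bits = projection.shape[0]
    projected_query = projection @ query  # the query is never quantized
    scale = np.sqrt(np.pi / 2) * key_norm / num_bits
    return float(scale * (projected_query @ signs))


rng = np.random.default_rng(1)
query = rng.standard_normal(64)
key = rng.standard_normal(64)
projection = make_projection(dim=64, num_bits=4096)
signs, key_norm = encode_key(projection, key)
print("exact   :", float(query @ key))
print("estimate:", estimate_inner_product(projection, query, signs, key_norm))
```

The asymmetry is the point of the design: only the stored side is reduced to sign bits, while the query stays in full precision, which keeps the estimate unbiased under the Gaussian assumption.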

Google Research describes the combined result directly: “TurboQuant is a compression method that achieves a high reduction in model size with zero accuracy loss, making it ideal for supporting both key-value (KV) cache compression and vector search.”

Benchmark results across five test suites

Google Research evaluated all three algorithms on five long-context benchmarks: LongBench, Needle In A Haystack, ZeroSCROLLS, RULER, and L-Eval. The test models were Gemma and Mistral. TurboQuant compressed KV caches to 3 bits per value without requiring model retraining or fine-tuning, and without measurable accuracy loss across question answering, code generation, and summarization tasks.

Memory reduction reached at least 6x relative to uncompressed KV storage. On NVIDIA H100 GPUs, 4-bit TurboQuant delivered up to an 8x speedup in computing attention logits over 32-bit unquantized keys. PolarQuant performed near-losslessly on needle-in-haystack retrieval tasks.

The algorithms were also evaluated against state-of-the-art vector search baselines, specifically Product Quantization (PQ) and RaBitQ. TurboQuant achieved higher recall ratios than both on the GloVe dataset (d=200) across top-k retrieval tasks, without the large codebooks and dataset-specific tuning those baseline methods require.
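For readers unfamiliar with the metric, the sketch below shows one common way to compute a recall ratio for top-k retrieval: the fraction of queries whose true nearest neighbour appears among the k candidates returned by the compressed index. The article does not spell out the exact definition used in the evaluation, so this definition and the function names are assumptions.

```python
import numpy as np


def top_k_by_inner_product(queries: np.ndarray, database: np.ndarray, k: int) -> np.ndarray:
    """Indices of the k highest-scoring database vectors for each query."""
    scores = queries @ database.T
    return np.argsort(-scores, axis=1)[:, :k]


def recall_at_k(approx_top_k: np.ndarray, exact_top_1: np.ndarray) -> float:
    """Share of queries whose exact best match is among the approximate top k."""
    hits = (approx_top_k == exact_top_1[:, None]).any(axis=1)
    return float(hits.mean())


# Toy data: four database vectors, two queries.
database = np.eye(4)
queries = np.eye(4)[:2]
exact_top_1 = top_k_by_inner_product(queries, database, k=1)[:, 0]

# Hypothetical candidates returned by a compressed index.
approx_top_2 = np.array([[0, 2], [3, 2]])
print(recall_at_k(approx_top_2, exact_top_1))  # first query hits, second misses -> 0.5
```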

Implications for vector search and inference infrastructure

Security and AI infrastructure teams running large-scale semantic search or LLM inference pipelines are directly affected by the memory constraints these algorithms address. KV cache size limits context length in production deployments, so compression that preserves output fidelity extends what a given GPU allocation can support. For vector search workloads used in threat intelligence retrieval, document similarity, and anomaly detection, techniques that reduce index memory without sacrificing recall directly affect query throughput at scale.
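The link between bits per value and usable context length can be sketched with back-of-the-envelope arithmetic. The model shape (32 layers, 8 KV heads, head dimension 128), the 16 GiB cache budget, and the 16-bit baseline below are hypothetical values chosen for illustration and do not come from Google's evaluation.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, bits_per_value: float) -> float:
    """Bytes needed to cache keys and values for seq_len tokens."""
    values = 2 * num_layers * num_kv_heads * head_dim * seq_len  # keys + values
    return values * bits_per_value / 8


def max_context_tokens(budget_bytes: int, num_layers: int, num_kv_heads: int,
                       head_dim: int, bits_per_value: float) -> int:
    """Longest context whose KV cache fits in the given memory budget."""
    bytes_per_token = kv_cache_bytes(num_layers, num_kv_heads, head_dim, 1, bits_per_value)
    return int(budget_bytes // bytes_per_token)


# Hypothetical model shape and cache budget.
shape = dict(num_layers=32, num_kv_heads=8, head_dim=128)
budget = 16 * 2**30  # 16 GiB reserved for the KV cache

print(max_context_tokens(budget, bits_per_value=16, **shape))  # 131072 tokens
print(max_context_tokens(budget, bits_per_value=3, **shape))   # 699050 tokens
```

The cache grows linearly with sequence length, so for a fixed budget the supported context scales inversely with the bits spent per cached value.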

Google Research notes that TurboQuant operates in a data-oblivious manner, meaning it requires no dataset-specific calibration. This property simplifies integration into inference systems and reduces the preprocessing pipeline needed before deployment.

The research team credits the theoretical grounding of the algorithms as a key factor in their reliability. TurboQuant and its component methods operate near known theoretical lower bounds for quantization distortion, a property the authors say makes them dependable for large-scale, production-grade systems.